These are the ramblings of Matthijs Kooijman, concerning the software he hacks on, hobbies he has and occasionally his personal life.

Most content on this site is licensed under the WTFPL, version 2 (details).

Questions? Praise? Blame? Feel free to contact me.

My old blog (pre-2006) is also still available.

See also my Mastodon page.


Yay! Last night (yes really night, it was 4:30 AM) I finalized and submitted the last chapter of my book. There are still a few details left, but the text of the book is out of my hands now, and the great folks at Packt Publishing are now doing their magic with correcting my English, testing my code, laying out the book, and so on.

Great to have the pressure of these (repeatedly postponed) deadlines off my back, so I can finally take some time to do some long-due other stuff. Like cleaning up my desk:

This past summer, I've been writing a book, which is now nearing completion. A few months ago I was approached by Packt, a publisher of technical books, which wanted to add a book about sensor networks using Arduino and XBee modules to their lineup, and asked me to write it. The result will be a book titled "Building Wireless Sensor Networks Using Arduino".

I've been working on the book since then and am now busy with the last revisions on the text and examples. Turns out that writing a book is a lot more work than I had anticipated, though that might be partly due to my own perfectionism. In any case, I'll be happy when I'm done in a few weeks and can spend some time again on all the stuff I've been postponing in the last few months :-)

I originally wrote this, just under a year ago when I returned from this sailing trip. I found this draft post again a few weeks ago, being nearly finished. Since I'm about to leave on a new sailing trip next week (to France this time, if the wind permits), I thought it would be nice to finish this post before then. Even though it's a year old, the story is bizarre enough to publish it still :-)

I've put photographs taken by me and Danny van den Akker up on Flickr.

Last year in May, I went on an 11-day sailing trip to the Isle of Wight, an island off the southern coast of England. Together with my father, brother and two others on my father's boat, as well as 10 other people on two more boats, we started out on this trip from Harderwijk on Thursday, navigating to the port of IJmuiden and going out onto the North Sea from there on Friday morning.

Initially, we had favourable wind and made good speed up to Dover at noon on Saturday. From then on, it seemed Murphy had climbed aboard our ship and we were in for our fair share of setbacks, partly balanced by some insane luck as well. In any case, here's a short timeline of the bad and lucky stuff:

- :-( Just around Beachy Head, our engine stopped running. We quickly restarted it and carried on, but shortly after the engine stopped again. It didn't want to start again either. In case you're wondering - not having a working engine is a problem for a (bigger) sailing ship. Sailing into an (unknown) (sea) port is usually hard and on the open sea your engine is an important safety measure in case of problems.

- :-) Fortunately, Atlantis, one of our companion ships, was only a few miles ahead (after having crossed most of the North Sea through different routes, many miles apart). We sailed towards the port of New Haven, where they towed us into the port.

- :-( We diagnosed that there was a problem in the fuel supply, but couldn't find the real cause. We spent the Sunday in the thrilling harbour town of New Haven, waiting for a mechanic to arrive.

- :-) On Monday morning, a friendly mechanic called Neil fixed our fuel tank - it turned out there was a hose in there with a clogged filter as well as a kink near the end that prevented fuel from getting through. Neil cut off the end of the tube, fixing both problems at once, and our engine ran like a charm again.

- :-( We continued towards Wight around noon, but shortly after passing No Man's Land Fort - guess what - our engine stopped running again.

- :-( This time we had no friends around to tow us in, but there was a Dutch ship (the Abel Tasman) nearby. We tried to get their attention using radio, a horn and a hand-held flare, but they misunderstood our intentions and carried on. We kept on sailing, but contacted the very polite and helpful Solent Coast Guard by radio, to let them know we were coming in by sail and might need a tow into the harbour.

- :-) After we finished our conversation with the Solent Coast Guard, the Abel Tasman hailed us on the radio and offered to tow us all the way to the harbour. With the wind dropping, we gladly accepted.

- :-) On Monday evening we arrived on the Isle of Wight!

- :-) In the harbour, our engine started again (with some initial coughing). Yay for having a working engine, but without having fixed a problem, it could break down any moment. :-( We had some theories about what the problem could be, but no real confidence in any of them.

- :-) In the morning, we called for Tristan, a mechanic from the town of Cowes where we were staying. He had a look at our engine, but since it was running again, there wasn't much he could do. He found a connection in the fuel line that wasn't very tight - guessing that that had allowed some air to slip into the fuel line. He tightened the connection and left us again.

- :-( Shortly after he left, we started the engine for a short refueling trip - and it stopped running again. We called for the mechanic to have another look, but he didn't show up all day...

- :-( Near the end of the day, we didn't want to wait anymore and tried to bleed the air out of our engine, thinking that was the last problem. No matter how hard we tried, neither air nor fuel would come through. Debugging further, we found that the fuel tank would again not supply any fuel at all. We "fixed" this by disconnecting the fuel line, blowing into it and then sucking at it (credits to Jan-Peter for sucking up a mouthful of diesel...). Together with the diesel came a wad of something that looked like mud.

- :-( Previously, we didn't suspect any pollution of our fuel tank, because the looking glass in the fuel filter was squeaky clean. Now, prompted by the gunk coming out of the tank, we opened up the filter to have a more thorough look and it turned out the filter was nearly completely filled with the same muddy stuff (which turned out to be "Diesel Bug" - various (byproducts of) bacteria and other organisms that like living in diesel tanks).

- :-) We didn't have a working engine yet, but at least we knew with confidence what the cause of our problems was, and had been. As Danny put it: At least it is truly broken now. All we needed now was machinery to filter our fuel and rinse the inside of the tank.

- :-( On Tuesday, we found another mechanic who couldn't help us directly, but made a few phone calls. He found someone with the exact machinery we needed. The only problem was that the machinery was in the Swanwick Marina, on the UK mainland.

- :-) On Wednesday, we tried to get into contact with the mechanic in Swanwick, to see if he could come over to Wight by ferry. We weren't hopeful, so in the meantime we also found a small plastic fuel tank (normally used for larger outboard motors) that could serve as a spare diesel tank. After also finding a few meters of diesel hose, getting an extra hose barb fitted and even getting a valve for fast on-the-fly switching between tanks (thanks to Power Plus Marine Engineering for going out of their way to help us!), we had a working engine again by the end of the day!

We weren't confident enough in our shiny new tank to sail all the way back to the Netherlands using it, but it would be more than sufficient to get over to Swanwick to get our main tank cleaned. As an extra bonus, it would be a good backup tank in case we still had problems after cleaning.

- :-) Early Thursday morning, we headed over to Swanwick where Geoffrey and Johnny laboured all morning to get a ton of sludge out of our tank. They sprayed the fuel back in using a narrow piece of pipe to clean the stuff off the edges of our tank as well as possible.

- :-( As soon as they were done, we cast off and set course to Brighton, Eastbourne, Dover, Ramsgate, Dunkerque, Oostende, Zeebrugge, Dunkerque. Unfortunately, the wind direction was unfavorable (and later also didn't abide by the prediction, to our disadvantage of course), so we ended up sailing for only a small bit and using our engine most of the way. We changed our destination a few times along the way, usually because we thought "We might as well go on for a bit more, the further we go now, the easier it will be for the last stretch". Near Dunkerque, we planned to go on to Zeebrugge, but the wind picked up significantly. With the waves and wind against us, we were getting a very rough ride, so we stopped at Dunkerque after all.

- :-( We came into port on Friday after office hours, so there was no personnel around anymore. In the restaurant we managed to get an access card so we could get to the toilet and back again, but the card had no balance, so we couldn't even take a shower...

- :-( Early Saturday morning we carried on, this time with a favorable wind direction, but not enough wind to make a decent speed. Since we had lost all the slack in our schedule already (we had planned to be home by Saturday afternoon), we used our engine again to make some speed.

- :-( Shortly after crossing the Westerschelde, our engine stopped running again (can you believe that?). We quickly found that the engine itself would run fine, but would stop as soon as you put it into gear - there was something blocking our screw propeller. Using Danny's GoPro underwater camera we confirmed that there was indeed something stuck in the screw. We didn't want to go into the water to fix it on the open sea because of the safety risk, as well as the slim chance that we'd actually be able to cut out whatever was stuck.

- :-) We contacted the coast guard again (the Dutch one this time), telling them we'd sail towards the Roompot locks and would probably need a tow through the locks and into the harbour. We contacted the Burghsluis harbour and found they had a boat crane and were willing to operate it during the evening, so we had our target.

- :-( Then the wind dropped to nearly zero and we just floated around the North Sea for a bit. After an hour of floating, we contacted the coast guard and asked them to tow us in all the way - we were losing time quickly and with unreliable wind, there was no way we could safely cross the northern exit channel of the Westerschelde.

- :-) While floating around a bit more, waiting for the coast guard to arrive, we slowly floated nearer to a small boat that we had previously seen in the distance already. Now, and this is where the story becomes even more unlikely, this boat was flying a "diver flag", meaning there was a diver in the water nearby.

We slowly approached the boat and shortly after yelling over our problem to the woman on the boat, the diver emerged from the water. She told him of our problem, he swam over and under our boat and not even 30 seconds later he emerged again carrying a fairly short but thick piece of rope that had been stuck in our screw.

We started our engine and were on our way again, suffering about a 3-4 hour delay in total.

- :-) We contacted the coast guard again to call them off, but they were already nearly there. To resolve some formalities, they continued heading our way. A minute or so later, the Koopmansdansk, a super-speedy boat from the KNRM, came alongside. After we gave them some contact information (read: tried to yell it over the roaring of their motor) and they gave us a flyer about sponsoring the KNRM, we both went our separate ways again.

- :-( During the night, a thunderstorm came over us. I missed most of it because I was sleeping, but Jan-Peter and Danny were completely drenched and attested that it was both heavy and somewhat scary due to the lightning.

- :-) Early Sunday morning we went through the locks in IJmuiden, and passed through Amsterdam and the Markermeer pretty uneventfully. It even seemed like I would be home just in time to head over to Enschede for our regular Glee night with some friends.

- :-( We were running low on diesel, so we had to find a place to fuel up in Lelystad. We found a harbour that was documented to have a gas pump, but didn't have one, so wasted some time finding one...

- :-( When we got to the locks of Roggebotsluis, it took a long time for the bridge to open. After floating around for 10 minutes or so, we noticed that one of the boom barriers (slagboom) looked warped and bent. It turned out that just before we arrived there, some car got locked in between the barriers, panicked, hit the barrier while backing up and then sped away past the barrier on the opposite side. A mechanic was being summoned, but it being a Sunday...

- :-) After a short while, the bridge controller fellow came out, pulled the boom somewhat back into shape and announced they'd give it a go. Thankfully for us, everything still worked and we were on our way!

- :-) No more delays after that. Brenda picked me up in the harbour, where I took a very quick shower before we headed to Enschede. Just in time for Glee, though I started falling asleep before we were even halfway. Apparently I was exhausted...

Overall, this was a crazy journey. All the problems we had were a bit stressful and scary at times, but it was nice nonetheless. I especially enjoyed trying to get things working again together; it was really a team effort.

Also interesting, especially for me, is that we had installed an AIS receiver on-board, which allows receiving information from nearby ships about their name, position, course and speed. This is especially useful in busy areas with lots of big cargo ships and to safely make your way through the big shipping lanes at sea.

This AIS receiver needed to be wired up to the existing instruments and to my laptop, so we could view the information on our maps. So I spent most of the first day belowdecks figuring this stuff out. I continued fiddling with these connections and settings to improve the setup, running into some bugs and limitations. Knowing I might have to improvise, I had packed a few Arduinos, some basic electronics and an RS232 transceiver, which allowed me to essentially build a NMEA multiplexer that can forward some data and drop other data. Yay for building your own hardware and software while at sea. Also yay for not getting seasick easily :-p
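To give an idea of what such a multiplexer has to decide for every line it receives, here is a minimal sketch in plain C++ (not the code that actually ran on the Arduino). The checksum rule (XOR of every character between the leading `$` or `!` and the `*`) is standard NMEA 0183; the function names and the forwarding policy (pass AIS and RMC sentences, drop everything else) are assumptions made up purely for illustration.

```cpp
#include <cstdint>
#include <string>

// NMEA checksum: XOR of all characters between the leading '$' or '!'
// and the '*' that precedes the two hex checksum digits.
uint8_t nmea_checksum(const std::string &sentence) {
    uint8_t sum = 0;
    for (std::size_t i = 1; i < sentence.size() && sentence[i] != '*'; ++i)
        sum ^= static_cast<uint8_t>(sentence[i]);
    return sum;
}

// Hypothetical filter policy: forward AIS sentences (they start with '!',
// e.g. "!AIVDM") and RMC position sentences, and drop everything else.
bool should_forward(const std::string &sentence) {
    if (sentence.size() < 6)
        return false;                            // too short to carry a type
    if (sentence[0] == '!')
        return true;                             // AIS data
    return sentence.compare(3, 3, "RMC") == 0;   // e.g. "$GPRMC,..."
}
```

A real multiplexer would read complete lines from each serial port, compare the computed checksum against the two hex digits after the `*`, and write the sentence to the output port only when `should_forward` agrees.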

Now, I'm getting ready for our next trip. We start, and possibly end, in Vlissingen this time (to buy a bit more time) and intend to go to Cherbourg. Let's hope this journey is a bit less "interesting" than the previous one!

I don't really have a well-defined plan for my professional career, but things keep popping up. A theme for this month seems to be "education".

I just returned from a day at Saxion hogeschool in Enschede, where I gave a lecture/workshop about programming Arduino boards without using the Arduino IDE or Arduino core code.

It was nice to be in front of students again and things went reasonably well. It's a bit different to engage non-academic (HBO) students and I didn't get around to telling and doing everything I had planned, but I have another followup lecture next week to improve on things.

At the same time, I'm currently talking with a publisher of technical books about writing a book about building wireless sensor networks with Arduino and XBee. I'll have to do some extensive research into the details of XBee for this, but I'm looking forward to seeing how I like writing such a technical book. If this blog is any indication, that should work out just fine.

For now, these are just small things next to my other work, but who knows what I'll come across next?

I was prompted to write this post after I got an email from another S270 user, thanking me for all the info I posted about these machines. He was also having power supply issues, with all the same resulting troubles I had (keyboard stuttering, USB issues, etc.).

He said he fixed this by simply replacing the notebook power supply and all his problems were gone. Interestingly I had already tried that and it didn't work for me, so we were having different problems.

Another reason for writing this post is that I actually fixed my power supply issues a few months ago. I can't really remember what prompted me to look into it again, but this time I didn't have anything to lose - I wasn't really using Xanthe for anything anymore. I opened up the casing completely and took out the mainboard to properly access the underside.

The power connector felt like it was fixed to the mainboard pretty tightly, but I put my soldering iron to it anyway. I just let the solder reflow nicely.

To my surprise, this actually worked. The power connector now powers the laptop properly, without any blinking. I suspect that the solder in one of the contacts had split and caused a flaky connection.

So, Xanthe is still idling around in a cabinet somewhere, but at least she's now usable as a backup or LARP prop sometime :-)

Last year, I got myself an Atmel JTAGICE3 programmer, in order to speed up programming my Pinoccio boards. This worked great, except that as can be expected of the tiny flatcable they used (1.27mm connector and even smaller flatcable), the cable broke within 6 months.

Atmel support didn't want to replace it, because it wasn't broken when I first unpacked the programmer, and told me to find and buy a new cable myself. Since finding these cables turns out to be tricky, and I didn't feel like breaking another cable in 6 months, I designed a converter board.

Every now and then I work on some complex C++ code (mostly stuff running on Arduino nowadays) so I can write up some code in a nice, concise and abstracted manner. This almost always involves classes, constructors and templates, which serve their purpose in the abstraction, but once you actually call them, the compiler should optimize all of them away as much as possible.

This usually works nicely, but there was one thing that kept bugging me. No matter how simple your constructors are, initializing using constructors always results in some code running at runtime.

In contrast, when you initialize a normal integer variable, or a struct variable using aggregate initialization, the compiler can do the initialization completely at compiletime. E.g. this code:

struct Foo {uint8_t a; bool b; uint16_t c;};

Foo x = {0x12, false, 0x3456};

Would result in four bytes (0x12, 0x00, 0x34, 0x56, assuming no padding and big-endian) in the data section of the resulting object file. This data section is loaded into memory using a simple loop, which is about as efficient as things get.

Now, if I write the above code using a constructor:

struct Foo {

uint8_t a; bool b; uint16_t c;

Foo(uint8_t a, bool b, uint16_t c) : a(a), b(b), c(c) {}

};

Foo x = Foo(0x12, false, 0x3456);

This will result in those four bytes being allocated in the bss section (which is zero-initialized), with the constructor code being executed at startup. The actual call to the constructor is inlined of course, but this still means there is code that loads every byte into a register, loads the address in a register, and stores the byte to memory (assuming an 8-bit architecture, other architectures will do more bytes at a time).

This doesn't matter much if it's just a few bytes, but for larger objects, or multiple small objects, having the loading code intermixed with the data like this easily requires 3 to 4 times as much code as having it loaded from the data section. I don't think CPU time will be much different (though first zeroing memory and then loading actual data is probably slower), but on embedded systems like Arduino, code size is often limited, so not having the compiler just resolve this at compiletime has always frustrated me.

Constant Initialization

Today I learned about a new feature in C++11: Constant initialization. This means that any global variables that are initialized to a constant expression will be resolved at compiletime and initialized before any (user) code (including constructors) starts to actually run.

A constant expression is essentially an expression that the compiler can guarantee can be evaluated at compiletime. They are required for e.g. array sizes and non-type template parameters. Originally, constant expressions included just simple (arithmetic) expressions, but since C++11 you can also use functions and even constructors as part of a constant expression. For this, you mark a function using the constexpr keyword, which essentially means that if all parameters to the function are compiletime constants, the result of the function will also be (additionally, there are some limitations on what a constexpr function can do).

So essentially, this means that if you add constexpr to all constructors and functions involved in the initialization of a variable, the compiler will evaluate them all at compiletime.
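As a minimal sketch of what that looks like for the struct from the start of this post: the only change is the constexpr keyword on the constructor. The name ConstexprFoo is made up here to keep it apart from the earlier Foo, and whether the bytes really end up in the data section still depends on the compiler.

```cpp
#include <cstdint>

// Same fields as the earlier Foo, but the constructor is marked constexpr.
struct ConstexprFoo {
    uint8_t a; bool b; uint16_t c;
    constexpr ConstexprFoo(uint8_t a, bool b, uint16_t c) : a(a), b(b), c(c) {}
};

// All arguments are compile-time constants and the constructor is constexpr,
// so the initializer is a constant expression and this global is eligible
// for constant initialization.
ConstexprFoo x = ConstexprFoo(0x12, false, 0x3456);

// The same initializer can also be used where a constant expression is
// required, which shows that the compiler can evaluate it at compile time.
static_assert(ConstexprFoo(0x12, false, 0x3456).c == 0x3456,
              "constructor evaluated at compile time");
```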

(On a related note - I'm not sure why the compiler doesn't deduce constexpr automatically. If it can verify whether it's allowed to use constexpr, why not add it? Might be too resource-intensive perhaps?)

Note that constant initialization does not mean the variable has to be declared const (i.e. immutable) - it's just that the initial value has to be a constant expression (which are really different concepts - it's perfectly possible for a const variable to have a non-constant expression as its value. This means that the value is set by normal constructor calls or whatnot at runtime, possibly with side-effects, without allowing any further changes to the value after that).
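A small sketch of the difference, with made-up names (counter, setting, read_setting) and std::rand standing in for any value that is only known at runtime:

```cpp
#include <cstdlib>

// Constant-initialized, but not const: the initial value is a constant
// expression, yet the variable can still be modified afterwards.
int counter = 6 * 7;

// const, but not constant-initialized: the value comes from a function call
// that runs during startup, and it cannot be changed after that.
int read_setting() { return std::rand() % 10; }  // hypothetical runtime-only source
const int setting = read_setting();

void bump() { counter++; }          // fine: counter is mutable
// void reset() { setting = 0; }    // would not compile: setting is const
```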

Enforcing constant initialization?

Anyway, so much for the introduction of this post, which turned out longer than I planned :-). I learned about this feature from this great post by Andrzej Krzemieński. He also writes that it is not really possible to enforce that a variable is constant-initialized:

It is difficult to assert that the initialization of globals really took place at compile-time. You can inspect the binary, but it only gives you the guarantee for this binary and is not a guarantee for the program, in case you target for multiple platforms, or use various compilation modes (like debug and retail). The compiler may not help you with that. There is no way (no syntax) to require a verification by the compiler that a given global is const-initialized.

If you accidentally forget constexpr on one function involved, or some other requirement is not fulfilled, the compiler will happily fall back to less efficient runtime initialization instead of notifying you so you can fix this.

This smelled like a challenge, so I set out to investigate if I could figure out some way to implement this anyway. I thought of using a non-type template argument (which are required to be constant expressions by C++), but those only allow a limited set of types to be passed. I tried using __builtin_constant_p, a non-standard gcc construct, but that doesn't seem to recognize class-typed constant expressions.
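For illustration, this is roughly what the template argument idea looks like for a plain int (the names RequireConstant and twice are invented for this sketch). It does force compile-time evaluation, but in C++11 there is no way to pass a class-typed value such as Foo(1) through it, which is why it doesn't help here.

```cpp
// A non-type template argument must be a constant expression, so wrapping a
// value in a template forces the compiler to evaluate it at compile time.
template <int N>
struct RequireConstant {
    static constexpr int value = N;
};

constexpr int twice(int x) { return 2 * x; }
int twice_at_runtime(int x) { return 2 * x; }   // deliberately not constexpr

int v = RequireConstant<twice(21)>::value;                  // OK
// int w = RequireConstant<twice_at_runtime(21)>::value;    // error: not a constant expression
```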

Using static_assert

It seems that using the (also introduced in C++11) static_assert statement is a reasonable (though not perfect) option. The first argument to static_assert is a boolean that must be a constant expression. So, if we pass it an expression that is not a constant expression, it triggers an error. For testing, I'm using this code:

class Foo {

public:

constexpr Foo(int x) { }

Foo(long x) { }

};

Foo a = Foo(1);

Foo b = Foo(1L);

We define a Foo class, which has two constructors: one accepts an int and is constexpr and one accepts a long and is not constexpr. Above, this means that a will be const-initialized, while b is not.

To use static_assert, we cannot just pass a or b as the condition, since the condition must have a bool type. Using the comma operator helps here (the comma accepts two operands, evaluates both and then discards the first to return the second):

static_assert((a, true), "a not const-initialized"); // OK

static_assert((b, true), "b not const-initialized"); // OK :-(

However, this doesn't quite work: neither of these results in an error. I was actually surprised here - I would have expected them both to fail, since neither a nor b is a constant expression. What we can do instead is simply copy the initializer used for both into the static_assert:

static_assert((Foo(1), true), "a not const-initialized"); // OK

static_assert((Foo(1L), true), "b not const-initialized"); // Error

This works as expected: the int version is OK, the long version throws an error. It doesn't trigger the assertion, but recent gcc versions show the line with the error, so it's good enough:

test.cpp:14:1: error: non-constant condition for static assertion

static_assert((Foo(1L), true), "b not const-initialized"); // Error

^

test.cpp:14:1: error: call to non-constexpr function ‘Foo::Foo(long int)’

This isn't very pretty though - the comma operator doesn't make it very clear what we're doing here. Better is to use a simple inline function, to effectively do the same:

template <typename T>

constexpr bool ensure_const_init(T t) { return true; }

static_assert(ensure_const_init(Foo(1)), "a not const-initialized"); // OK

static_assert(ensure_const_init(Foo(1L)), "b not const-initialized"); // Error

This achieves the same result, but looks nicer (though the ensure_const_init function does not actually enforce anything, it's the context in which it's used, but that's a matter of documentation).

Note that I'm not sure if this will actually catch all cases. I'm not entirely sure if the stuff involved with passing an expression to static_assert (optionally through the ensure_const_init function) is exactly the same stuff that's involved with initializing a variable with that expression (e.g. similar to the copy constructor issue below).

The function itself isn't perfect either - It doesn't handle (const) (rvalue) references so I believe it might not work in all cases, so that might need some fixing.

Also, having to duplicate the initializer in the assert statement is a big downside - If I now change the variable initializer, but forget to update the assert statement, all bets are off...

Using constexpr constant

As Andrzej pointed out in his post, you can mark variables with constexpr, which requires them to be constant initialized. However, this also makes the variable const, meaning it cannot be changed after initialization, which we do not want. However, we can still leverage this using a two-step initialization:

constexpr Foo c_init = Foo(1); // OK

Foo c = c_init;

constexpr Foo d_init = Foo(1L); // Error

Foo d = d_init;

This isn't very pretty either, but at least the initializer is only defined once. This does introduce an extra copy of the object. With the default (implicit) copy constructor this copy will be optimized out and constant initialization still happens as expected, so no problem there.

However, with user-defined copy constructors, things are different:

class Foo2 {

public:

constexpr Foo2(int x) { }

Foo2(long x) { }

Foo2(const Foo2&) { }

};

constexpr Foo2 e_init = Foo2(1); // OK

Foo2 e = e_init; // Not constant initialized but no error!

Here, a user-defined copy constructor is present that is not declared with constexpr. This results in e being not constant-initialized, even though e_init is (this is actually slightly weird - I would expect the initialization syntax I used to also call the copy constructor when initializing e_init, but perhaps that one is optimized out by gcc in an even earlier stage).

We can use our earlier ensure_const_init function here:

constexpr Foo f_init = Foo(1);

Foo f = f_init;

static_assert(ensure_const_init(f_init), "f not const-initialized"); // OK

constexpr Foo2 g_init = Foo2(1);

Foo2 g = g_init;

static_assert(ensure_const_init(g_init), "g not const-initialized"); // Error

This code is actually a bit silly - of course f_init and g_init are const-initialized, they are declared constexpr. I initially tried this separate init variable approach before I realized I could (need to, actually) add constexpr to the init variables. However, this silly code does catch our problem with the copy constructor. This is just a side effect of the fact that the copy constructor is called when the init variables are passed to the ensure_const_init function.

Using two variables

One variant of the above would be to simply define two objects: the one you want, and an identical constexpr version:

Foo h = Foo(1);

constexpr Foo h_const = Foo(1);

It should be reasonable to assume that if h_const can be const-initialized, and h uses the same constructor and arguments, that h will be const-initialized as well (though again, no real guarantee).

This assumes that the h_const object, being unused, will be optimized away. Since it is constexpr, we can also be sure that there are no constructor side effects that will linger, so at worst this wastes a bit of memory if the compiler does not optimize it.

Again, this requires duplication of the constructor arguments, which can be error-prone.

Summary

There are two significant problems left:

- None of these approaches actually guarantees that const-initialization happens. It seems they catch the most common problem: having a non-constexpr function or constructor involved, but inside the C++ minefield that is (copy) constructors, implicit conversions, half a dozen initialization methods, etc., I'm pretty confident that there are other caveats we're missing here.

- None of these approaches is very pretty. Ideally, you'd just write something like:

constinit Foo f = Foo(1);

or, slightly worse:

Foo f = constinit(Foo(1));

Implementing the second syntax seems to be impossible using a function - function parameters cannot be used in a constant expression (they could be non-const). You can't mark parameters as constexpr either.

I considered using a preprocessor macro to implement this. A macro can easily take care of duplicating the initialization value (and since we're enforcing constant initialization, there are no side effects to worry about). It's tricky, though, since you can't just put a static_assert statement, or an additional constexpr variable declaration, inside a variable initialization. I considered using a C++11 lambda expression for that, but those can only contain a single return statement and nothing else (unless they return void) and cannot be declared constexpr...

Perhaps a macro that completely generates the variable declaration and initialization could work, but still a single macro that generates multiple statements is messy (and the usual do {...} while(0) approach doesn't work in global scope). It's also not very nice...
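For what it's worth, such a declaration-generating macro could look roughly like the sketch below. CONST_INIT and the _const_check suffix are names invented here, Gadget is just a stand-in test class like Foo, and this inherits all the caveats of the two-variable approach (it only proves that the constexpr twin can be constant-initialized).

```cpp
// Stand-in test class: one constexpr constructor, one that is not.
struct Gadget {
    int value;
    constexpr Gadget(int v) : value(v) {}
    Gadget(long v) : value(static_cast<int>(v)) {}  // deliberately not constexpr
};

// Hypothetical helper: declares `name` plus a constexpr twin that uses the
// same initializer. If the initializer is not a constant expression, the
// twin fails to compile, so the initializer only has to be written once.
#define CONST_INIT(type, name, ...) \
    constexpr type name##_const_check = __VA_ARGS__; \
    type name = __VA_ARGS__

CONST_INIT(Gadget, g, Gadget(1));        // OK, and g itself stays mutable
// CONST_INIT(Gadget, h, Gadget(1L));    // error: call to non-constexpr constructor
```

The variadic parameter is only there so that initializers containing commas survive macro expansion.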

Any other suggestions?

Update 2020-11-06: It seems that C++20 has introduced a new keyword, constinit, to do exactly this: require that a variable is constant-initialized, without also making it const like constexpr does. See https://en.cppreference.com/w/cpp/language/constinit
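A minimal sketch of how that looks, assuming a C++20 compiler. The Widget class is made up for the example, and the feature-test macro is only there so the snippet still compiles (without any enforcement) on older standards.

```cpp
// Made-up example class with a constexpr constructor.
struct Widget {
    int value;
    constexpr Widget(int v) : value(v) {}
};

#if defined(__cpp_constinit)
// C++20: compilation fails if the initializer is not a constant expression,
// yet the variable itself stays mutable.
constinit Widget w = Widget(1);
#else
// Older standards: no enforcement, w is merely eligible for constant
// initialization.
Widget w = Widget(1);
#endif

void set_value(int v) { w.value = v; }   // allowed: w is not const
```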

For anyone who cares: I just replaced my GPG (GNU Privacy Guard) key that I use for signing my emails and Debian uploads.

My previous key was already 9 years old and used a 1024-bit DSA key. That seemed like a good idea at the time, but for some time these small keys and signatures using SHA-1 have been considered weak and their use is discouraged. By the end of this year, Debian will be actively removing the weak keys from their keyring, so about time I got a stronger key as well (not sure why I didn't act on this before, perhaps it got lost on a TODO list somewhere).

In any case, my new key has ID A1565658 and fingerprint E7D0 C6A7 5BEE 6D84 D638 F60A 3798 AF15 A156 5658. It can be downloaded from the keyservers, or from my own webserver (the latter includes my old key for transitioning).

Now, I should find some Debian Developers to meet in person and sign my key. Should have taken care of this before T-Dose last year...

When working with an Arduino, you often want the serial console to stay open, for debugging. However, while you have the serial console open, uploading will not work (because the upload relies on the DTR pin going from high to low, which happens when opening up the serial port, but not if it's already open). The official IDE includes a serial console, which automatically closes when you start an upload (and once this pullrequest is merged, automatically reopens it again).

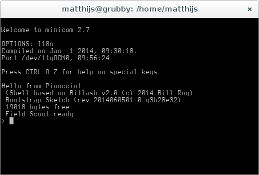

However, of course I'm not using the GUI serial console in the IDE, but minicom, a text-only serial console I can run inside my screen. Since the IDE (which I do use for compiling and uploading, by calling it on the commandline using a Makefile - I still use vim for editing) does not know about my running minicom, uploading breaks.

I fixed this using some clever shell scripting and signal-passing. I have an arduinoconsole script (that you can pass the port number to open - pass 0 for /dev/ttyACM0) that opens up the serial console, and when the console terminates, it is restarted when you press enter, or a proper signal is received.

The other side of this is the Makefile I'm using, which kills the serial console before uploading and sends the restart signal after uploading. This means that usually the serial console is already open again before I switch to it (or, I can switch to it while still uploading and I'll know uploading is done because my serial console opens again).

For convenience, I pushed my scripts to a github repository, which makes it easy to keep them up-to-date too:

While setting up Tika, I stumbled upon a fairly unlikely corner case in the Linux kernel networking code, that prevented some of my packets from being delivered at the right place. After quite some digging through debug logs and kernel source code, I found the cause of this problem in the way the bridge module handles netfilter and iptables.

Just in case someone else actually finds themselves in this situation and actually manages to find this blogpost, I'll detail my setup, the problem and its solution here.